Ads are showing up in places they never used to. Inside AI chat responses. Embedded in search answer cards. Woven into AI-assisted results that users are increasingly turning to instead of traditional search pages. For brands running paid media, this shift isn’t something to bookmark for later. It’s happening now, and it’s changing how ad inventory gets bought, measured, and optimized.

The question most teams are actually wrestling with isn’t whether AI ad placements matter. It’s how to make them work. How do you get access? How do you know if they’re performing? How much do you spend to get a real read on the opportunity?

AI ad placements are no longer experimental. They’re part of the active paid media mix, and brands that figure out how to access, measure, and budget for them will have a real advantage over those still treating them as an untested side channel. This guide walks through each of those areas in enough detail to help you move forward without guessing.

How Brands Can Access AI Ad Inventory

Not all AI ad inventory works the same way. Depending on where you want your ads to appear and how much control you want over placement, there are two main routes available to most advertisers right now: buying directly through AI-first platforms or tapping into AI surfaces through existing campaign types on networks like Google and Microsoft.

The difference matters because these routes come with different creative requirements, different transparency levels, and different cost structures.

Buying Directly vs. Through Existing Campaign Types

Direct buys on AI-first platforms give you a clearer view of where your budget is going. You know your spend is going specifically to AI surfaces, which makes it easier to look at performance in isolation. Platforms like Perplexity have started rolling out sponsored placements inside AI-generated answers, and Microsoft’s Copilot has built ad experiences into its AI-assisted search results. Buying directly through these platforms usually means working with their sales teams or managed service layers, at least for now, since self-serve access is still catching up in some cases.

The trade-off is that direct buys often come with tighter inventory, higher minimum spends, and more back-and-forth in negotiations. They’re better suited for brands that want to test AI placements as a distinct channel with isolated measurement.

On the other side, campaign types like Google’s Performance Max and the newer AI Max for Search campaigns let your existing creative surface across AI-powered experiences as part of a broader placement mix. You won’t always get full visibility into what percentage of your budget landed on an AI surface versus a traditional one, but you get the benefit of Google’s or Microsoft’s machine learning optimizing delivery across a much wider range of inventory. This works well when your goal is scale and you’re comfortable with a blended performance picture rather than platform-specific data.

Both routes have a genuine role to play depending on what you’re trying to accomplish. Direct buys make sense when you need clean test data or want to build a case for AI-specific investment. Existing campaign types make sense when you want broader reach without rebuilding your whole campaign structure.

Why Creative Flexibility Determines Your Access

A lot of brands underestimate this part: access to AI ad inventory isn’t just about budget or bidding. It’s heavily tied to how flexible your creative is.

AI ad placements are dynamic by nature. The platform takes your assets, things like headlines, descriptions, images, and product data, and assembles them based on a user’s specific query or conversational context. The more you try to control exactly how those assets get combined, the less eligible your ads become for high-quality AI surfaces.

Brands with pinned assets, rigid legal copy requirements, or strict compliance constraints will find their inventory access limited. If every headline has to appear in a fixed order, or if your legal team needs to approve every possible copy combination before anything goes live, you’re working against how AI ad serving actually functions.

That doesn’t mean abandoning brand standards. It means working within them more cleverly. Providing a wider set of approved headline and description variations, building modular copy that holds up in different combinations, and giving the platform more raw material to work with will expand your eligibility and give the system room to find what actually resonates.

—

How to Measure Performance Across AI Ad Placements

Measurement is where most brands get genuinely confused with AI placements. The instinct is to apply the same performance lens used for traditional PPC, meaning ROAS, CPA, and conversion volume. That instinct isn’t crazy, but it often leads teams to undervalue what AI surfaces are actually contributing.

Why Last-Click Attribution Undersells AI Surfaces

One of the most important things to understand about AI ad placements is how they interact with the consideration cycle. Traditional paid search catches people who are already in active decision mode. They type a query, they click, they convert or they don’t. The path is pretty linear.

AI placements work differently. When a user is inside an AI chat or reading an AI-generated answer, they’re often in a more exploratory mode. But here’s where attribution gets tricky: AI can compress that exploration dramatically. A user might go from genuinely discovering your brand inside an AI response to completing a purchase in under 30 minutes, simply because the AI surfaced the right product recommendation at the right moment in the conversation.

That speed makes AI placements a hybrid of brand media and performance media. They introduce your brand to a new audience, but they can also close that loop very quickly. Last-click attribution models frequently miss the introduction credit and see only the final touchpoint before the conversion, or they miss the conversion entirely if it happens outside the tracking window.

Relying only on last-click data gives you an incomplete picture. You might look at an AI placement campaign, see a CPA that looks high compared to your brand search campaigns, and decide to cut it, without realizing you just removed the channel that was feeding your brand search pipeline in the first place.
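To make the CPA distortion concrete, here is a minimal sketch that credits the same set of conversions two ways: last-click, and an even split across every touchpoint in the path. The paths, channel names, and spend figures are invented for illustration, and the even split is only a simple stand-in for multi-touch credit, not how any ad platform's data-driven model actually works.

```python
from collections import defaultdict

# Hypothetical conversion paths: each list is the ordered set of paid
# touchpoints that preceded one conversion. Illustrative data only.
paths = [
    ["ai_placement", "brand_search"],
    ["ai_placement", "brand_search"],
    ["brand_search"],
    ["ai_placement"],
]

# Hypothetical spend per channel over the same period.
spend = {"ai_placement": 400.0, "brand_search": 300.0}


def last_click(paths):
    """Give 100% of each conversion to the final touchpoint."""
    credit = defaultdict(float)
    for path in paths:
        credit[path[-1]] += 1.0
    return dict(credit)


def linear(paths):
    """Split each conversion evenly across every touchpoint in its path."""
    credit = defaultdict(float)
    for path in paths:
        share = 1.0 / len(path)
        for channel in path:
            credit[channel] += share
    return dict(credit)


def cpa(spend, credit):
    """Cost per credited conversion for each channel."""
    return {ch: spend[ch] / credit[ch] for ch in credit}


last_click_credit = last_click(paths)
linear_credit = linear(paths)

print("Last-click CPA:", cpa(spend, last_click_credit))
print("Linear CPA:    ", cpa(spend, linear_credit))
```

With these made-up numbers, the AI placement's CPA is halved once it gets partial credit for the conversions it introduced, while brand search looks less efficient than last-click suggested. The spend is the same in both views; only the credit assignment changes.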

The Metrics That Actually Reflect AI Placement Value

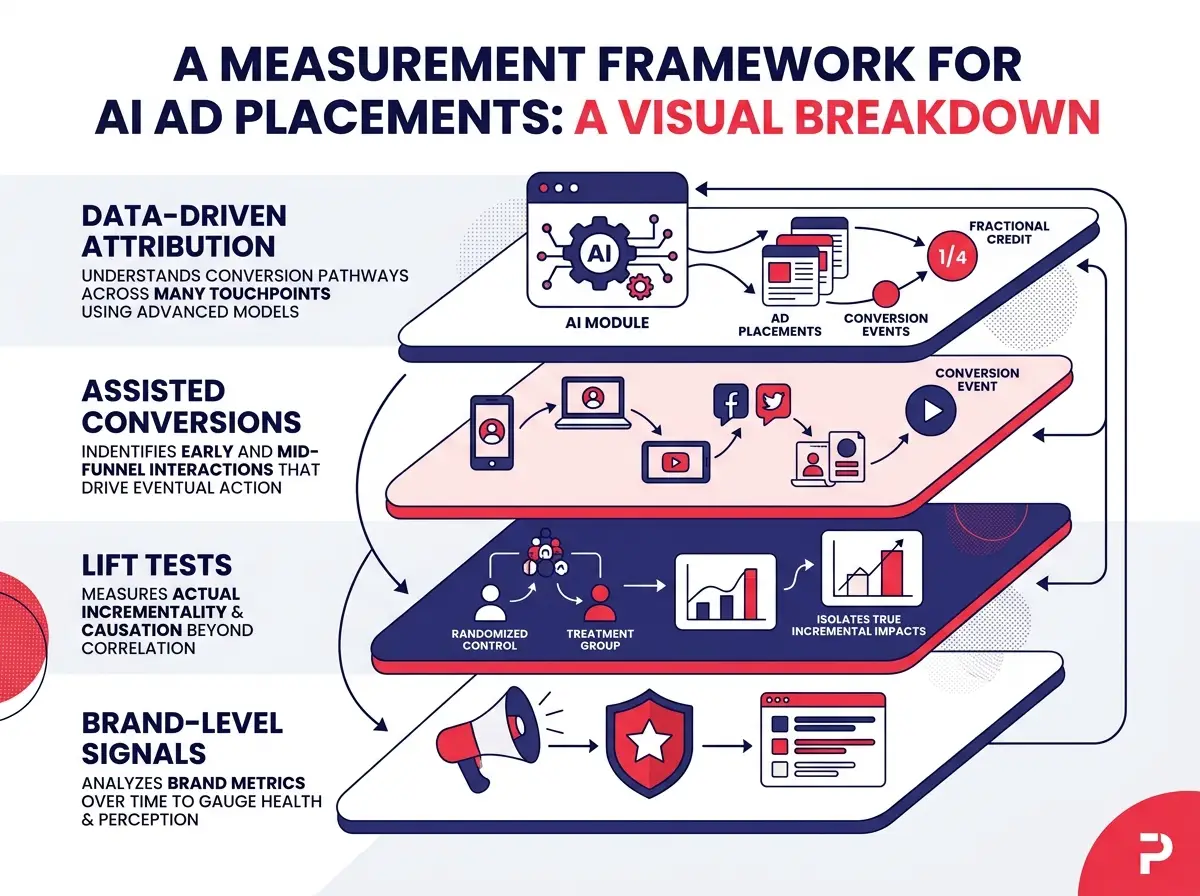

A more complete measurement approach for AI placements uses several layers working together rather than depending on a single number.

Data-driven attribution is the baseline. If you’re still using last-click, switching to data-driven attribution across your account is the first practical step that gives AI placements more appropriate credit for their role across the full conversion path.

Assisted conversions give you a fuller picture of how often an AI placement touchpoint appeared somewhere in a user’s journey before they eventually converted through another channel. This is especially useful during the early testing period when you’re trying to understand whether AI inventory is generating real downstream value.

Structured lift tests let you measure the incremental impact of your AI placements more rigorously. By holding out a portion of your audience from seeing AI ads and comparing their behavior against the exposed group, you build a cleaner case for the real impact these placements are generating beyond what attribution models can capture.
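As a rough sketch of how a holdout comparison turns into a lift number, the snippet below computes absolute and relative lift from exposed and holdout conversion counts, plus a standard two-proportion z-score as a sanity check on whether the difference is likely noise. The group sizes and conversion counts are hypothetical, and a real lift study would typically rely on the platform's own experiment tooling and a properly randomized holdout.

```python
import math


def lift_summary(exposed_users, exposed_conversions,
                 holdout_users, holdout_conversions):
    """Compare conversion rates between exposed and holdout groups."""
    exposed_rate = exposed_conversions / exposed_users
    holdout_rate = holdout_conversions / holdout_users
    absolute_lift = exposed_rate - holdout_rate

    # Relative lift is undefined if the holdout group never converted.
    relative_lift = absolute_lift / holdout_rate if holdout_rate > 0 else None

    # Conversions in the exposed group beyond what the holdout rate predicts.
    incremental_conversions = absolute_lift * exposed_users

    # Two-proportion z-score using the pooled conversion rate.
    pooled = (exposed_conversions + holdout_conversions) / (exposed_users + holdout_users)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / exposed_users + 1 / holdout_users))
    z_score = absolute_lift / std_err if std_err > 0 else None

    return {
        "exposed_rate": exposed_rate,
        "holdout_rate": holdout_rate,
        "absolute_lift": absolute_lift,
        "relative_lift": relative_lift,
        "incremental_conversions": incremental_conversions,
        "z_score": z_score,
    }


# Hypothetical test: 90% of the audience exposed, 10% held out.
result = lift_summary(
    exposed_users=90_000, exposed_conversions=1_350,
    holdout_users=10_000, holdout_conversions=120,
)
for name, value in result.items():
    print(f"{name}: {value:.4f}")
```

In this invented example the exposed group converts at 1.5% against 1.2% for the holdout, a relative lift of about 25%. A z-score above roughly 1.96 is the conventional threshold for a difference unlikely to be chance at the 95% level, though small holdout groups can make that reading unstable.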

Brand-level signals round out the picture. Watch for movement in branded search volume, citation share in AI-generated answers (even organic ones), and shifts in direct traffic in the weeks following AI placement campaigns. These signals won’t show up in your ad platform dashboard, but they reflect the awareness and consideration lift that AI surfaces are generating.

PPC teams that build these measurement layers before scaling AI placement spend will be in a much stronger position to make confident optimization decisions rather than trying to read the tea leaves later.

—

Building a Budget for AI Ad Placements That Makes Sense

Once you have a clear sense of how to access AI inventory and how to measure it properly, the next challenge is deciding how much to spend and how to structure that spending in a way your team can actually defend internally. Budget conversations around newer channels always involve some uncertainty, but there are frameworks that make the decision feel less like guessing.

How AI Placement Pricing Actually Works

Pricing for AI ad placements isn’t uniform, and treating it like standard paid search CPC will lead to budget miscalculations. The dynamics vary quite a bit depending on whether you’re buying directly through an AI-first platform or accessing AI surfaces through a broader network campaign.

AI-first platforms like Perplexity tend to reflect tighter inventory because there are fewer total ad slots available within AI-generated answers than on a traditional results page. The user experience rules are also stricter. These platforms are protective of the quality of the AI responses they serve, which means ad frequency is lower and placement requirements are more demanding. That can translate to higher CPCs in certain categories, though it also means less competition in some cases where advertisers haven’t yet prioritized these platforms.

On the major ad networks, AI surfaces are part of a mixed inventory pool, which tends to moderate pricing but also means you have less visibility into what exactly you’re paying for. Performance Max campaigns, for example, optimize across all of Google’s inventory including AI placements, but the reported averages blend all of that together.

The most grounded approach to budget planning is to anchor your numbers to your specific category, not a general benchmark. Start with your current conversion rate and average order value or lead value. Back into the CPC range that would still let you hit an acceptable target CPA. Then estimate the click volume needed to accumulate enough conversion data to make a reasonably confident decision. A common rule of thumb, rather than a formal significance threshold, is at least 30 to 50 conversions per ad group or campaign variant. That gives you a minimum viable test budget rather than a number someone pulled from a general industry average.
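The arithmetic behind that approach can be sketched in a few lines. The target CPA, conversion rate, expected CPC, and variant count below are placeholder inputs, not benchmarks, and the function names are made up for this example; swap in your own account data.

```python
import math


def max_affordable_cpc(target_cpa, conversion_rate):
    """Highest CPC that still meets the target CPA at a given conversion rate."""
    return target_cpa * conversion_rate


def minimum_test_budget(target_conversions, conversion_rate, expected_cpc, variants=1):
    """Clicks and media spend needed to reach the conversion target per variant."""
    # Round before ceil so floating-point noise does not add a phantom click.
    clicks_per_variant = math.ceil(round(target_conversions / conversion_rate, 6))
    total_clicks = clicks_per_variant * variants
    return total_clicks, total_clicks * expected_cpc


# Placeholder inputs: replace with figures from your own account.
target_cpa = 80.00        # acceptable cost per conversion
conversion_rate = 0.02    # 2% of clicks convert
expected_cpc = 1.50       # what you expect to pay per click
target_conversions = 40   # midpoint of the 30 to 50 rule of thumb

cpc_ceiling = max_affordable_cpc(target_cpa, conversion_rate)
clicks, budget = minimum_test_budget(
    target_conversions, conversion_rate, expected_cpc, variants=2
)

print(f"CPC ceiling: ${cpc_ceiling:.2f}")
print(f"Clicks needed: {clicks}")
print(f"Minimum media budget: ${budget:,.2f}")
```

If the expected CPC comes in above the CPC ceiling, the test cannot hit the target CPA at the current conversion rate, which is worth knowing before any budget is committed.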

Structuring a Test Budget Your Team Can Defend

The financial investment in AI placements gets most of the attention, but the time investment is just as real and just as important to account for when building your plan.

Creative for AI surfaces is more complex than standard paid search ads. Many AI ad formats include pre-click engagement options that let users expand product information, ask follow-up questions, or explore service details before ever leaving the AI environment and visiting your site. That means your creative strategy needs to account for copy variants that work at different stages of that interaction, structured product data that feeds AI-native formats correctly, and a content approach that serves someone who is still exploring rather than someone who is ready to click and buy.

When you’re presenting a test budget to leadership or a client, build in the cost of creative production and ongoing iteration alongside the media spend. In most mid-competition categories, a six-to-eight-week test with a media budget of $5,000 to $10,000 per month can generate enough data to make a meaningful call, but only if the creative is set up correctly from the start and the measurement framework is in place before the campaign goes live.

Plan for a structured performance review at the halfway point of your test, not just at the end. AI placements can show different performance patterns in the first few weeks compared to later in the test as the system learns, and catching that shift early lets you make smarter optimizations rather than waiting until the budget is gone.

Building a smarter PPC strategy with AI ad placements isn’t about betting on a trend. It’s about understanding a real shift in how paid media inventory works and putting your brand in position to take advantage of it with the right access, the right measurement, and a budget structure that gives the channel a fair chance to prove its value.