Imagine doing everything right for traditional SEO and still getting ignored by AI-powered search engines. Your page ranks on the first page, gets decent traffic, and covers the topic thoroughly. Yet when someone asks a related question in Google AI Overviews, Perplexity, or Microsoft Copilot, your content is nowhere in the cited sources. That gap between ranking and getting cited is exactly what Generative Engine Optimization is designed to close.

Here’s what trips most people up: there is no single “content quality score” secretly controlling which pages AI engines choose to cite. What actually exists is a system of signals, and once you understand that system, you can start improving your visibility in a way that actually sticks.

This guide breaks down how AI engines evaluate pages, which signals matter most for getting cited, and how to build a practical measurement plan to track real GEO progress over time.

What GEO actually is and why traditional SEO metrics fall short

Generative Engine Optimization vs. traditional SEO: a real distinction

Traditional SEO is about earning a high-ranking position on a results page. You optimize for keywords, build backlinks, improve page speed, and hope users click through. Success is measured in rankings and organic traffic.

Generative Engine Optimization works differently. The goal isn’t just to rank on a page; it’s to earn a citation inside an AI-generated answer. When someone asks Google AI Overviews a question, the model doesn’t just list links. It writes a response and selects a handful of sources to reference. Being one of those cited sources requires a fundamentally different mindset than chasing a number one ranking.

The reason traditional metrics fall short in GEO comes down to what AI engines actually care about. Position one on Google Search doesn’t automatically mean your page gets included in an AI Overview. AI systems weigh trust, clarity, verifiability, and helpfulness in ways that pure keyword optimization can’t reliably satisfy. A page stuffed with the right terms but lacking credible sourcing, clear authorship, or up-to-date facts is far less likely to get cited, regardless of where it ranks traditionally.

One clarification worth making: there is no public, holistic page quality score that governs organic rankings or AI citation selection. Google’s guidance focuses on helpful, people-first content and E-E-A-T principles rather than any single numeric metric. This is a common source of confusion, especially for those familiar with Google Ads’ Quality Score, which is an ads-only diagnostic and has nothing to do with organic search or AI answer inclusion.

How Google AI Overviews, Perplexity, and Copilot select sources

Different AI engines approach source selection in slightly different ways, but the pattern is consistent across all of them.

Google AI Overviews generates responses grounded in Search systems, and supporting links appear alongside the answer. Google’s own guidance makes clear that the same fundamentals driving organic Search (helpful content, clear entity signals, and technical eligibility) also support AI Overview inclusion.

Microsoft Copilot pulls from web search and presents linked citations alongside its responses, making the source transparent to the user. In enterprise contexts, admins can designate specific knowledge sources, and grounding safeguards are applied to keep responses accurate.

Perplexity behaves like a live answer engine, blending real-time web retrieval with model reasoning. Citations appear inline so users can verify claims directly. While Perplexity doesn’t publish a formal ranking policy, its behavior reflects a clear preference for verifiable, well-sourced content.

The common thread across all three: there is no AI inclusion switch you can flip. What raises your chances is being discoverable, trustworthy, entity-clear, and technically accessible. If a machine struggles to parse your page or a human struggles to verify its claims, your odds drop.

The signals that actually move the needle in GEO

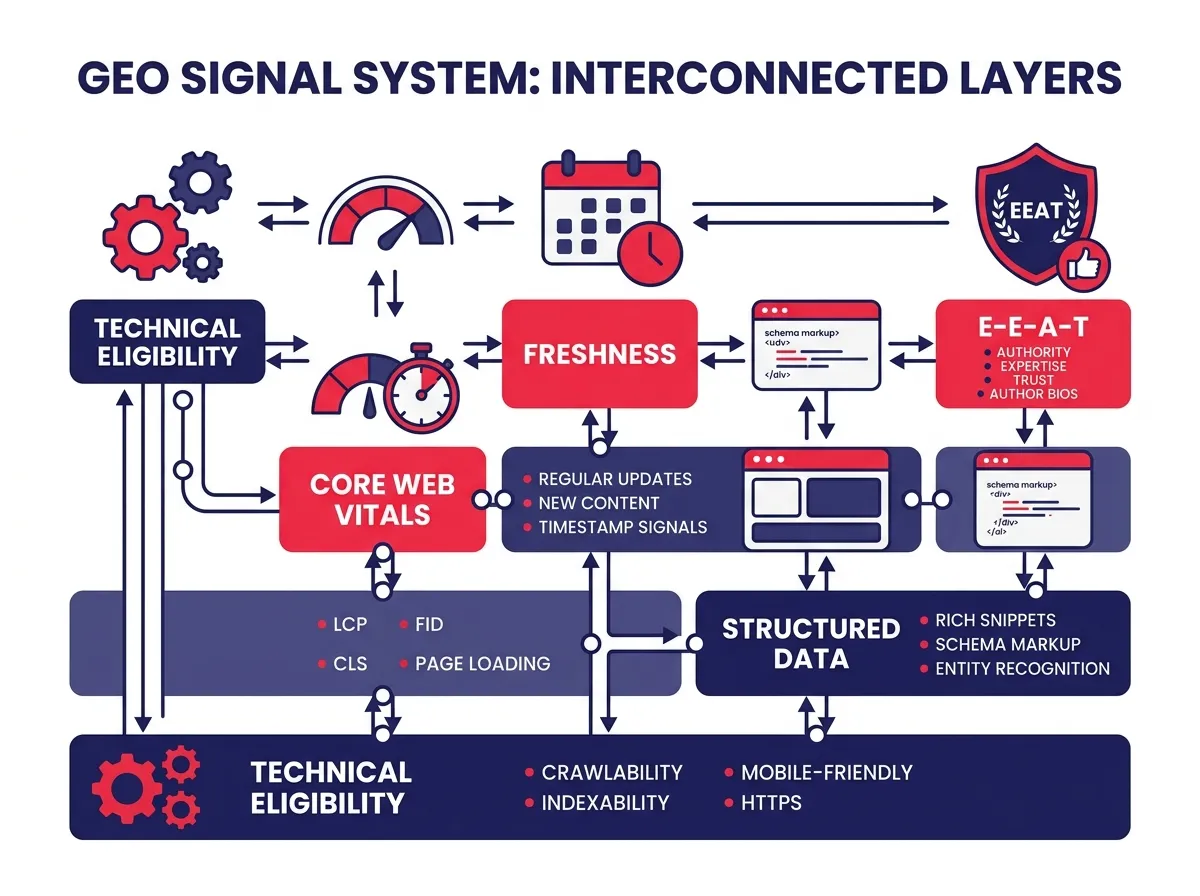

E-E-A-T, source-backed claims, and entity clarity

When AI engines decide which sources to cite, they’re essentially asking one question: can we trust this page enough to put our name behind it? The signals that answer that question fall into a few clear categories.

E-E-A-T stands for Experience, Expertise, Authoritativeness, and Trust. These aren’t abstract concepts. They show up in concrete page elements: author bios with real credentials, editorial policies that explain how content is reviewed, solid About and Contact pages, and citations that link to primary sources rather than secondary interpretations.

AI engines prefer sources that demonstrate a trustworthy editorial context. A page where the author is unnamed, sources are absent, and no organization stands behind the content gives an AI model very little to work with when deciding whether to cite it. Compare that to a page with a named expert, linked credentials, and citations pointing to original research. The second page is far easier for an AI system to trust and quote without surfacing inaccurate information.

Source-backed claims matter for the same reason. When your content links to original research, official documentation, or established standards bodies within the body of the text, AI engines can follow that chain of evidence. Verifiable claims are easier to summarize without distortion, which makes them more attractive to cite.

Entity clarity is a signal many content creators overlook entirely. Clear entity signals help AI models map your page to the right topics, organizations, and people. Adding structured data like Organization or ProfilePage schema, using consistent naming across your site and external profiles, and maintaining stable canonical URLs all make it easier for AI systems to understand who you are, what you cover, and why you’re relevant to a specific query.
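To make the structured data idea concrete, here is a minimal sketch that builds Organization markup as JSON-LD. The organization name, URLs, and the helper name `organization_jsonld` are placeholders invented for illustration; only the schema.org property names (`@type`, `name`, `url`, `sameAs`) come from the vocabulary itself.

```python
import json


def organization_jsonld(name: str, url: str, same_as: list[str]) -> str:
    """Return Organization JSON-LD as a string (hypothetical helper)."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,        # use the same spelling everywhere on the site
        "url": url,          # the stable, canonical home page URL
        "sameAs": same_as,   # external profiles that refer to the same entity
    }
    return json.dumps(data, indent=2)


# Placeholder values; swap in your own organization details.
markup = organization_jsonld(
    name="Example Co",
    url="https://www.example.com/",
    same_as=["https://www.linkedin.com/company/example-co"],
)

# JSON-LD is typically embedded in the page inside a script tag.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Generating the markup from one function keeps the name and profile links identical across templates, which is the consistency the paragraph above is asking for.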

Here’s a quick comparison of the signals and what they mean in practice:

| Signal | Why it matters for AI citations | Practical action |
|---|---|---|
| E-E-A-T and authorship | Builds the trust foundation AI engines rely on | Add author bios, editorial policies, source citations |
| Source-backed claims | Makes content easier to verify and summarize | Link to primary research and official documents |
| Structured data and entity clarity | Helps AI map content to the right topics and entities | Add schema markup, consistent sameAs links |
| Freshness and update cadence | Signals up-to-date accuracy for time-sensitive queries | Timestamp updates, maintain visible changelogs |
| Core Web Vitals | Acts as a tie-breaker between equally helpful pages | Monitor and remediate LCP, INP, and CLS regressions |
| Technical eligibility | Ensures the page is crawlable, indexable, and consolidated | Clean robots directives, sitemaps, and canonicals |

Freshness, Core Web Vitals, and technical eligibility

Even the best-written page can get passed over if it’s technically invisible or outdated. These factors are more like eligibility requirements than ranking boosters, but ignoring them quietly erodes your chances over time.

Freshness matters more for time-sensitive topics. If someone asks about current best practices, pricing, or recent events, an AI engine will lean toward sources with recent timestamps and visible update histories. A simple habit is to timestamp meaningful updates, add brief changelog notes to cornerstone guides, and schedule periodic reviews for pages covering fast-moving topics.
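A periodic review schedule can be as simple as a script that flags pages whose last meaningful update is older than a chosen window. The sketch below assumes you already record an update date per URL; the 90-day window, the URLs, and the function name `stale_pages` are illustrative assumptions, not recommendations from any AI engine.

```python
from datetime import date


def stale_pages(last_updated: dict[str, date], today: date, max_age_days: int) -> list[str]:
    """Return URLs whose last meaningful update is older than max_age_days."""
    return sorted(
        url
        for url, updated in last_updated.items()
        if (today - updated).days > max_age_days
    )


# Hypothetical update log for two cornerstone guides.
pages = {
    "/guides/geo": date(2025, 1, 10),
    "/guides/schema": date(2025, 6, 1),
}

due_for_review = stale_pages(pages, today=date(2025, 7, 1), max_age_days=90)
print(due_for_review)  # ['/guides/geo']
```

Passing `today` in explicitly keeps the check reproducible; fast-moving topics would likely get a shorter window than evergreen ones.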

Core Web Vitals measure real-world page experience: loading speed, interactivity, and visual stability. These aren’t the most decisive GEO factors, but they function as tie-breakers. When multiple pages are equally helpful and trustworthy, a page with strong LCP, INP, and CLS scores has an edge over one with poor performance.
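As a rough illustration of monitoring these metrics, the sketch below compares a page's 75th-percentile field values against the thresholds Google publishes for a "good" rating (LCP at or under 2.5 seconds, INP at or under 200 milliseconds, CLS at or under 0.1). The dictionary keys and the sample values are made up for the example; real numbers would come from whatever field data source you use.

```python
# Upper bounds for a "good" rating, evaluated at the 75th percentile.
GOOD_THRESHOLDS = {"lcp_ms": 2500, "inp_ms": 200, "cls": 0.1}


def failing_vitals(p75: dict[str, float]) -> list[str]:
    """Return the metrics whose 75th-percentile value exceeds the 'good' bound."""
    return [metric for metric, limit in GOOD_THRESHOLDS.items() if p75[metric] > limit]


# Hypothetical field data for one page.
page = {"lcp_ms": 2300, "inp_ms": 260, "cls": 0.05}

print(failing_vitals(page))  # ['inp_ms']
```

An empty list means the page passes all three metrics, which is the pass-rate figure you would track over time.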

Technical eligibility is foundational. If your page is blocked by robots directives, has messy canonicals, or struggles with crawl errors, you’ve reduced your odds before any quality signals even come into play. A weekly audit of indexation coverage, structured data validation, and internal linking health is a worthwhile habit.
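One piece of that audit, checking whether robots directives block a page, can be sketched with Python's standard `urllib.robotparser`. The robots.txt content, user agent string, and URLs below are placeholders; in practice you would load your site's real robots.txt and test the crawlers you care about. This covers robots.txt only, not meta robots tags or canonicals.

```python
from urllib.robotparser import RobotFileParser


def is_crawlable(robots_txt: str, user_agent: str, url: str) -> bool:
    """Check a URL against robots.txt rules supplied as text (no network access)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical robots.txt that blocks a drafts directory for all crawlers.
ROBOTS = """\
User-agent: *
Disallow: /drafts/
"""

print(is_crawlable(ROBOTS, "Googlebot", "https://www.example.com/guides/geo"))  # True
print(is_crawlable(ROBOTS, "Googlebot", "https://www.example.com/drafts/geo"))  # False
```

Running a check like this over the URLs in your sitemap is a quick way to catch a cornerstone page that was blocked by accident.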

Building a practical GEO measurement plan you can run this quarter

The KPIs that actually reflect GEO performance

The right way to measure GEO progress isn’t to chase a third-party content score. It’s to track whether AI engines are actually citing your pages more often, in more relevant contexts, and with positive framing.

The KPIs that reflect real GEO performance include:

- Share of citations in AI answers across Google AI Overviews, Perplexity, and Copilot, segmented by query cluster

- Sentiment distribution attached to brand mentions (positive, neutral, or negative framing)

- Freshness velocity, meaning how recently each key page received a meaningful update

- E-E-A-T completion rate, tracking whether author bios, editorial policies, schema markup, and sameAs links are in place

- Technical health metrics, including Core Web Vitals pass rates, indexation coverage, and structured data validation status
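The first KPI in the list above can be computed from a simple log of observed AI answers. The sketch below uses one possible definition of citation share: the fraction of logged answers, per engine and query cluster, that cite your domain. The record fields (`engine`, `cluster`, `cited_domains`) and the sample data are assumptions for illustration, not a standard format.

```python
from collections import defaultdict


def citation_share(observations: list[dict], our_domain: str) -> dict[tuple[str, str], float]:
    """Fraction of logged answers per (engine, cluster) that cite our_domain."""
    totals: dict[tuple[str, str], int] = defaultdict(int)
    hits: dict[tuple[str, str], int] = defaultdict(int)
    for obs in observations:
        key = (obs["engine"], obs["cluster"])
        totals[key] += 1
        if our_domain in obs["cited_domains"]:
            hits[key] += 1
    return {key: hits[key] / totals[key] for key in totals}


# Hypothetical log: one record per AI answer checked by hand or by a tool.
log = [
    {"engine": "perplexity", "cluster": "geo-basics", "cited_domains": ["example.com", "other.org"]},
    {"engine": "perplexity", "cluster": "geo-basics", "cited_domains": ["other.org"]},
    {"engine": "copilot", "cluster": "geo-basics", "cited_domains": ["example.com"]},
]

shares = citation_share(log, "example.com")
for (engine, cluster), share in sorted(shares.items()):
    print(f"{engine:<12} {cluster:<12} {share:.0%}")
```

With this sample log, Perplexity shows a 50% share and Copilot 100% for the same cluster. Other definitions are possible, such as your citations divided by all citations in the answer, so pick one and keep it fixed across weeks.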

Third-party content quality scores from SEO platforms can be useful starting points. They can surface coverage gaps, flag thin sections, and highlight ambiguous terminology worth cleaning up. But treating them as end goals leads to a specific kind of failure: over-optimization. A 2025 analysis from Surfer found only a weak-to-moderate correlation between its content quality score and actual rankings, and the study explicitly cautions against over-relying on the metric. Optimizing a score without validating citations is working in the dark.

The practical framing is simple. Use tool scores to identify gaps. Make edits based on user value and credible sourcing, not just term frequency. Then measure success by whether AI engines cite your content more often and more positively, not by whether the score went up.

A weekly workflow loop: diagnose, improve, validate

A repeatable weekly process is more valuable than any one-time optimization sprint. Here’s a workflow that closes the loop between diagnosis and improvement without becoming overwhelming:

Step 1: Define query clusters. Group your core topics into meaningful clusters tied to the entities and questions you want to be known for. This gives your monitoring a clear scope.

Step 2: Monitor AI answers. Check Google AI Overviews, Perplexity, and Copilot responses for your target query clusters. Log which URLs and brands are being cited, in what context, and for which intent types (how-to, definition, comparison).

Step 3: Classify sentiment. For each brand or page mention found, tag the framing as positive, neutral, or negative. Track changes over time by engine and by intent category.

Step 4: Audit E-E-A-T and structured data. Verify that your Organization and ProfilePage schema is valid, sameAs links are consistent, author bios are present, and editorial policies are visible.

Step 5: Update pages that lost citations. Expand topical coverage where gaps exist, add primary source citations, clarify entity naming, and document changes with visible timestamps.

Step 6: Track technical health. Review Core Web Vitals data, check for crawl errors or indexation drops, and validate structured data weekly.
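Steps 3 and 5 lend themselves to a small amount of automation. The sketch below, under the assumption that you keep a weekly set of cited URLs and a list of sentiment tags, shows one way to find pages that dropped out of AI answers and to summarize framing. The function names, URLs, and tags are hypothetical.

```python
from collections import Counter

SENTIMENT_LABELS = ("positive", "neutral", "negative")


def lost_citations(previous: set[str], current: set[str]) -> list[str]:
    """URLs cited last week that are no longer cited this week (Step 5 input)."""
    return sorted(previous - current)


def sentiment_distribution(tags: list[str]) -> dict[str, float]:
    """Share of mentions per sentiment label (Step 3 summary)."""
    counts = Counter(tags)
    total = sum(counts[label] for label in SENTIMENT_LABELS)
    if total == 0:
        return {label: 0.0 for label in SENTIMENT_LABELS}
    return {label: counts[label] / total for label in SENTIMENT_LABELS}


# Hypothetical weekly observations.
last_week = {"/guides/geo", "/guides/schema"}
this_week = {"/guides/geo"}
tags = ["positive", "neutral", "positive", "negative"]

print(lost_citations(last_week, this_week))  # ['/guides/schema']
print(sentiment_distribution(tags))
# {'positive': 0.5, 'neutral': 0.25, 'negative': 0.25}
```

The list of lost URLs becomes the update queue for the week, and the distribution gives a single number per label to compare across engines and intent categories.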

This loop turns GEO from a vague aspiration into a measurable, manageable process. The cycle is diagnose, improve, and validate, with validation judged by whether citation share actually improves across engines.

There are a few pitfalls to avoid along the way. Treating a third-party score as the end goal leads to term stuffing and writing that feels hollow. Ignoring entity clarity and structured data leaves AI systems unable to confidently map your content. Neglecting freshness and technical eligibility quietly reduces your chances in competitive answer sets, even when the content itself is strong.

The reliable path forward is consistent: write genuinely helpful content, make it easy for machines to parse and humans to trust, keep it current, and measure success by citation share and sentiment across AI engines. If a researcher would feel confident citing your page, an AI engine probably will too. That’s the standard worth optimizing for in Generative Engine Optimization.