A growing share of search interactions now starts inside generative AI systems rather than traditional search engines. People type full questions, refine them through follow-ups, and want synthesized answers — not a list of links to sort through. If you work in SEO, this isn’t some distant trend to monitor. It’s changing how content strategy works right now.

The search environment has gotten genuinely complicated. Traditional ranked results, AI-generated summaries, and conversational assistants all coexist, and brands that want to stay visible need to show up across multiple surfaces at once. That’s a much bigger challenge than it was just a few years ago.

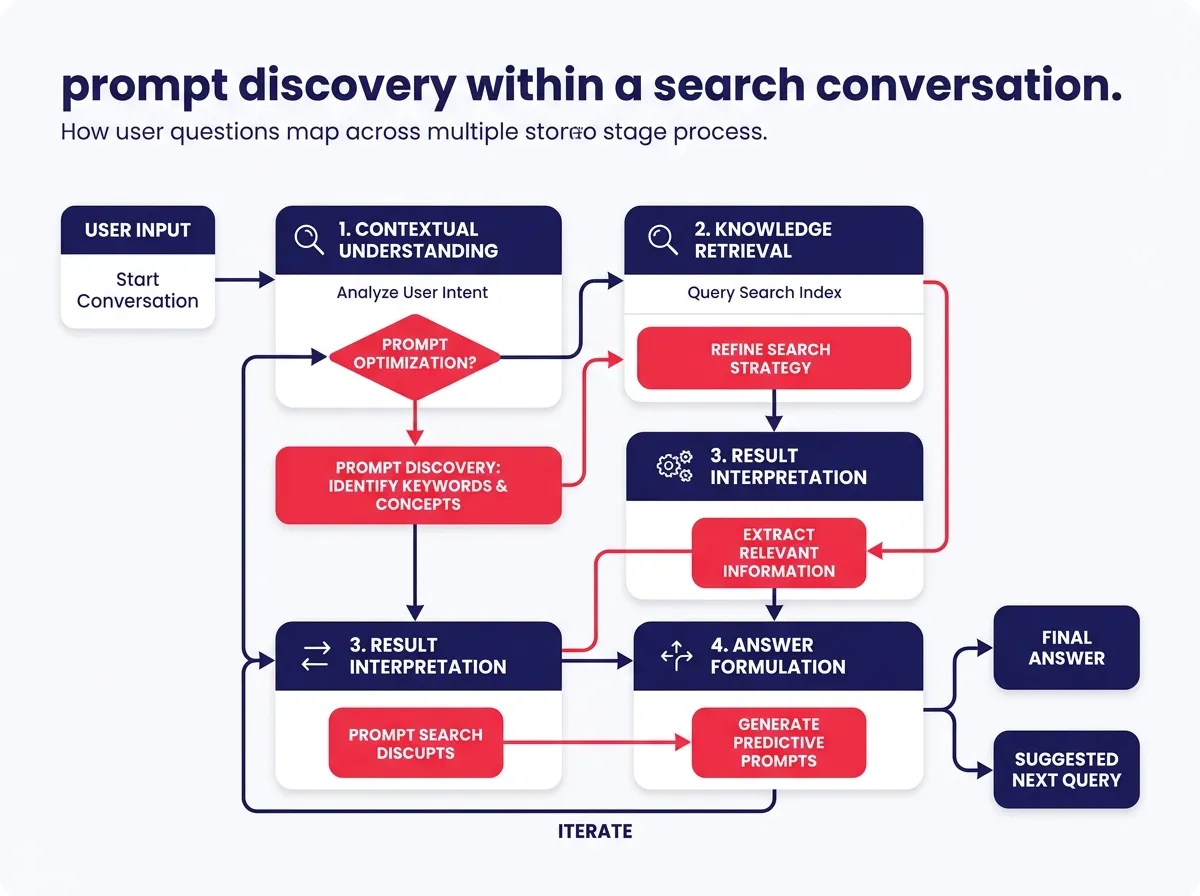

Prompt research is one of the most useful tools for handling that complexity. It’s become a foundational practice for teams trying to bridge conventional SEO with generative engine optimization — GEO — by understanding how users phrase and sequence their questions inside AI-driven search sessions. This guide breaks down what that looks like in practice.

How AI search is changing the way people discover content

From single queries to conversational search sessions

Think about how someone searched five years ago. They opened a search engine, typed something short like “best project management tools,” scanned a list of results, clicked a few links, and moved on. Pretty linear.

AI search doesn’t work that way. Someone might start with “What project management tool is best for a small remote team?” then follow up with “How does Notion compare to Asana for that?” then “What should I look for in the setup process?” Each question builds on the last. The search becomes a conversation, not a lookup.

This matters a lot for content creators and SEO professionals. Generative AI platforms actively pull users toward natural language questions that unfold across multiple follow-up prompts. Users aren’t looking for ten blue links anymore. They want answers that feel tailored to their specific situation — and they’re increasingly getting them.

For content teams, a single well-optimized blog post doesn’t cut it on its own anymore. You have to think about the full arc of how someone explores a topic: discovery, comparison, clarification, decision-making — each happening across separate prompts. That’s a fundamentally different way of thinking about content coverage.

What this means for traditional SEO visibility

Traditional SEO visibility leaned heavily on keyword density, meta tags, backlink profiles, and page authority scores. Those things still matter. But they’re not the whole picture anymore.

Generative systems pick sources they read as credible and contextually relevant to whatever prompt the user entered. So visibility now depends on how well your content actually addresses the questions people ask inside AI tools — not just whether a keyword appears enough times on the page.

Here’s a concrete way to think about it: if a user asks an AI assistant a specific question about your area of expertise and your content doesn’t answer that question in plain language, the AI probably won’t surface your work as a reference. That’s what GEO is about — optimizing for discoverability inside generative systems, not just traditional search engines.

The brands building advantages right now are the ones treating AI search as its own surface with its own rules, rather than assuming traditional SEO tactics will carry over automatically. Some will. A lot won’t.

What is prompt research and how does it work?

Prompt discovery: finding the questions users actually ask

Keyword research has always been about understanding what people are searching for. Prompt research extends that idea into AI-driven environments, where questions are longer, more specific, and often part of a multi-step conversation rather than a standalone query.

At its core, prompt research means identifying recurring question patterns across generative platforms. Instead of pulling search volume data from a keyword tool, you draw on sources such as AI chat logs, community forums like Reddit and Quora, customer support tickets, sales call transcripts, and social media discussions. These sources show how people phrase their questions naturally, before autocomplete or keyword suggestions have shaped them.

The goal is to map the full arc of how users explore a topic. A useful prompt discovery process looks at questions across different stages of curiosity:

- Entry-level questions: What is this? How does it work?

- Comparative questions: How does this compare to that? Which is better for my situation?

- Clarifying questions: What exactly does that mean? Can you explain it differently?

- Action-oriented questions: How do I get started? What should I do first?

When you map these out, patterns emerge. Certain questions come up repeatedly, often in sequence. That sequencing matters — it tells you what someone needs before and after any given piece of content. An AI prompt isn’t an isolated search; it’s usually one step in a longer exploration.
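To make the sequencing idea concrete, here is a minimal sketch, assuming you have already exported prompts grouped by session from one of the sources above. The cue phrases in `STAGE_CUES` and the function names `tag_stage` and `stage_transitions` are hypothetical choices for illustration, not part of any tool. It tags each prompt with one of the four stages using simple phrase matching, then counts which stage tends to follow which.

```python
from collections import Counter

# Hypothetical cue phrases per stage; tune these against your own prompt data.
# Order matters: the first stage with a matching cue wins, so the broad
# "entry" cues are checked last.
STAGE_CUES = {
    "comparative": ("compare", " vs ", "versus", "better", "difference"),
    "clarifying": ("what exactly", "mean", "explain"),
    "action": ("how do i", "get started", "should i do", "set up"),
    "entry": ("what is", "how does", "why"),
}


def tag_stage(prompt: str) -> str:
    """Return the first stage whose cue phrase appears in the prompt."""
    text = f" {prompt.lower()} "
    for stage, cues in STAGE_CUES.items():
        if any(cue in text for cue in cues):
            return stage
    return "other"


def stage_transitions(sessions):
    """Count (stage, next_stage) pairs across a list of prompt sessions."""
    counts = Counter()
    for session in sessions:
        stages = [tag_stage(p) for p in session]
        counts.update(zip(stages, stages[1:]))
    return counts


# Illustrative session, not real user data.
sessions = [
    ["What is GEO?", "How does GEO compare to SEO?", "How do I get started?"],
]
transitions = stage_transitions(sessions)
for (current, following), n in transitions.most_common():
    print(f"{current} -> {following}: {n}")
```

Phrase matching is crude and will mislabel some prompts, so treat the counts as a starting point for manual review rather than a finished classification.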

Prompt clustering and mapping: turning questions into content structure

Once you have a solid collection of raw questions, the next step is grouping them. Prompt clustering means organizing questions by intent and theme so you can see the full topic landscape rather than a scattered pile of phrases.

A practical way to think about clusters is by intent type:

| Cluster type | What it covers | Example prompt |
|---|---|---|
| Informational | What, why, how it works | “What is generative engine optimization?” |
| Comparative | Differences, pros and cons | “How does GEO differ from traditional SEO?” |
| Transactional | Actions, next steps | “How do I start optimizing content for AI search?” |
| Strategic | Planning, big picture | “What content strategy works best for AI visibility?” |

Grouping prompts this way lets you build content structures that address a complete topic rather than chasing isolated keywords. Instead of publishing one article on a broad topic and hoping it ranks, you’re creating a web of content that mirrors the actual conversation a user has with an AI tool, question by question.
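Intent is one axis for grouping; theme is the other. As a rough sketch of theme grouping, the snippet below clusters prompts by the words they share. It assumes a small hand-picked stopword list and a similarity threshold of 0.3, both arbitrary illustration values, and the names `tokens`, `jaccard`, and `cluster_prompts` are hypothetical. It is a simple greedy pass using Jaccard similarity, not a claim about how any particular tool clusters prompts.

```python
import re

# Small illustrative stopword list; extend it for real data.
STOPWORDS = {
    "a", "an", "the", "is", "for", "to", "do", "does",
    "how", "what", "i", "from", "in", "of", "and", "my", "it",
}


def tokens(prompt):
    """Lowercase content words of a prompt, as a set."""
    words = re.findall(r"[a-z0-9]+", prompt.lower())
    return {w for w in words if w not in STOPWORDS}


def jaccard(a, b):
    """Shared words divided by total distinct words (0.0 if either is empty)."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def cluster_prompts(prompts, threshold=0.3):
    """Greedy single pass: join the most similar cluster or start a new one."""
    clusters = []  # each item: {"terms": set of words, "prompts": list of str}
    for prompt in prompts:
        toks = tokens(prompt)
        best = max(clusters, key=lambda c: jaccard(toks, c["terms"]), default=None)
        if best is not None and jaccard(toks, best["terms"]) >= threshold:
            best["prompts"].append(prompt)
            best["terms"] |= toks
        else:
            clusters.append({"terms": set(toks), "prompts": [prompt]})
    return [c["prompts"] for c in clusters]


# Illustrative prompts, not real user data.
raw_prompts = [
    "What is generative engine optimization?",
    "How does generative engine optimization work?",
    "Which project management tool is best for remote teams?",
    "Best project management tool for a small team?",
]
groups = cluster_prompts(raw_prompts)
for group in groups:
    print(group)
```

Word overlap misses paraphrases that share no vocabulary, so a larger prompt set would likely need manual merging of clusters or a more capable similarity measure.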

Prompt mapping takes this further. After clustering, you assign each group to a specific piece of content or flag it as a gap that needs to be filled. That turns your editorial calendar into something with a clear purpose tied directly to how real users explore your topic.
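One lightweight way to keep such a map is a list of records, each tying a cluster to a page or leaving the page empty. The sketch below is an assumption about how a team might store this, not a prescribed format; the `ClusterAssignment` class, the `split_covered_and_gaps` helper, and the example URL are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ClusterAssignment:
    cluster: str                # short label for the prompt cluster
    intent: str                 # cluster type, e.g. "Informational"
    url: Optional[str] = None   # page that answers it; None marks a gap


def split_covered_and_gaps(assignments):
    """Separate clusters that already have a page from those that do not."""
    covered = [a for a in assignments if a.url is not None]
    gaps = [a for a in assignments if a.url is None]
    return covered, gaps


# Hypothetical map; the URL is a placeholder, not a real page.
prompt_map = [
    ClusterAssignment("what is GEO", "Informational", "/blog/what-is-geo"),
    ClusterAssignment("GEO vs traditional SEO", "Comparative"),
    ClusterAssignment("getting started with AI search optimization", "Transactional"),
]

covered, gaps = split_covered_and_gaps(prompt_map)
for gap in gaps:
    print(f"GAP [{gap.intent}]: {gap.cluster}")
```

The gap list can feed the editorial calendar directly, with each entry becoming a candidate brief.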

This works for both SEO and GEO because it focuses on depth and relevance — not surface-level optimization tricks that may not survive the next algorithm update.

Building an SEO and GEO content strategy around prompt research

Structuring content for both search engines and generative systems

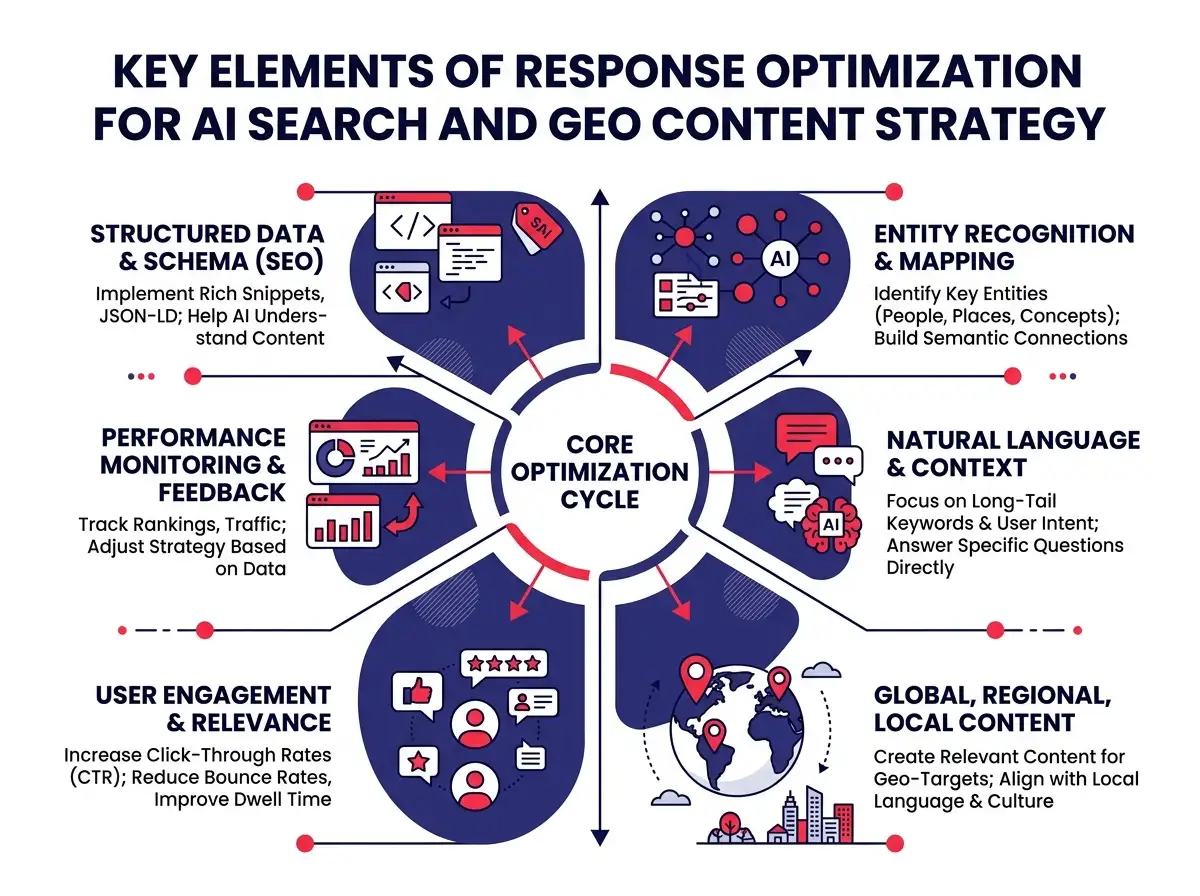

Once your prompt clusters are mapped, the challenge becomes building content that actually performs across traditional search results and AI-generated answers. That requires a different approach than most teams are used to.

A few elements tend to matter most:

- Concise section-level explanations: Each section of your content should be able to stand alone as an answer. AI systems often pull specific paragraphs rather than entire articles, so every major section needs to be clear enough to make sense in isolation.

- FAQ blocks that mirror real prompts: Including a frequently asked questions section that uses the exact phrasing people actually use in AI searches makes it much easier for generative systems to match your content to specific prompts.

- Clear entity references: Mentioning specific tools, brands, concepts, and named frameworks helps AI systems understand what your content is about and when it’s worth surfacing.

- Supporting data and citations: Content backed by real data tends to be treated as more credible by generative systems. Specific statistics, studies, and concrete examples give your content more weight than vague generalizations.

Topical authority also matters more than it used to. One strong article is less effective than a cluster of well-connected content that covers a topic from multiple angles. When a generative system sees that your site has addressed a topic thoroughly across many related pieces, it’s more likely to draw from your work when a relevant AI prompt comes through.

Worth saying plainly: structuring content for AI visibility doesn’t mean abandoning your human reader. The qualities that make content useful to a generative system — clarity, depth, direct answers — are the same qualities that make it useful to an actual person. The two goals are more aligned than they might seem at first.

Risks, measurement challenges, and keeping the human reader first

No content strategy comes without trade-offs, and this one has a few real ones.

Limited algorithm transparency is a persistent problem. Traditional search engines at least publish guidelines and update notes. Generative AI systems are largely black boxes. You often can’t tell why one piece of content gets cited and another doesn’t, which makes it hard to iterate quickly based on performance data. You’re doing some amount of informed guessing, and it’s worth being honest about that.

Inconsistent attribution from AI referral traffic is another practical issue. When an AI assistant answers a question using your content, the user often doesn’t click through to your site. That means your traffic numbers may not reflect how often your content is actually being used. Teams need to look beyond click-through rates and pay attention to brand mentions, direct traffic patterns, and search impression data as supplementary signals.

The risk of optimizing away the reader is also real. Some teams get so focused on structuring content for machine readability that the writing becomes hollow — technically complete, emotionally empty. That’s counterproductive. Content that reads like a checklist might satisfy a few surface-level criteria, but it won’t build the kind of trust that sustains long-term performance in either SEO or GEO.

The most practical starting point is auditing your existing content against your prompt clusters. Look at what you already have and identify the gaps. Which questions do users commonly ask inside AI tools that your current content doesn’t directly address? Those gaps are your highest-priority opportunities.
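A first pass at this audit can be automated, at least roughly. The sketch below assumes you have each page's text available as a string and uses word coverage as a stand-in for "directly addresses", which is a crude proxy. The function names, the 0.6 threshold, the stopword list, and the example URLs are all hypothetical.

```python
import re

# Small illustrative stopword list; extend it for real data.
STOPWORDS = {
    "a", "an", "the", "is", "for", "to", "do", "does",
    "how", "what", "i", "from", "in", "of", "and",
}


def content_words(text):
    """Lowercase content words of a text, as a set."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {w for w in words if w not in STOPWORDS}


def audit(prompts, pages, min_coverage=0.6):
    """Map each prompt to its best-covering page URL, or None for a gap.

    Coverage is the share of the prompt's content words found on the page.
    """
    page_words = {url: content_words(text) for url, text in pages.items()}
    report = {}
    for prompt in prompts:
        wanted = content_words(prompt)
        best_url, best_score = None, 0.0
        for url, have in page_words.items():
            score = len(wanted & have) / len(wanted) if wanted else 0.0
            if score > best_score:
                best_url, best_score = url, score
        report[prompt] = best_url if best_score >= min_coverage else None
    return report


# Placeholder pages and prompts for illustration only.
pages = {
    "/what-is-geo": "What is generative engine optimization? GEO explained for content teams.",
    "/seo-basics": "Traditional SEO basics: keywords, backlinks, and meta tags.",
}
prompts = [
    "What is generative engine optimization?",
    "How does GEO differ from traditional SEO?",
]
report = audit(prompts, pages)
for prompt, url in report.items():
    print(f"{prompt} -> {url or 'GAP'}")
```

A page can contain every word of a prompt without answering it, so flagged matches still deserve a human read; the value here is mainly in surfacing prompts that clearly have no home.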

Teams that make this audit a regular part of their workflow — not a one-time project — will be in a much better position as AI search continues to shift. The goal isn’t to game any single system. It’s to be genuinely useful to people asking questions, whatever format they’re using to ask them.

Prompt research isn’t a replacement for traditional SEO. It’s an extension of it. Understanding your audience, building credible content, developing topical depth — all of that still applies. What changes is the surface you’re optimizing for and the format of the questions you’re trying to answer.

As AI-driven search sessions become more common across industries, the teams with a strong foundation in both SEO and GEO — grounded in real prompt research — will be the ones showing up where it matters.